
12/13/2023 Openzfs mountpoint legacy fstab

I experienced a very similar issue (maybe identical). I never observed anything being written to the cache file. The particular disks I was using were cannibalized from an old HP ProLiant server with Smart Array. Maybe this had something to do with it? I was able to create a pool with the drives as vdevs just fine, but re-importing them (even using /dev/disk/by-id) did not work in my case. I also tried recreating the GPT partition table on the drives using gparted, with no luck. I was able to fix the problem by wiping the first few gigabytes of the drive, then recreating everything.

So we can either add a new command to the zpool utility to resync the SPA from disk, should the boot sequence ever need this, and then add the appropriate command to the initrd scripts for ZFS, or extend the spa_write_cachefile function to check on write whether the root file system has just been mounted and, if so, first resync into core any pools from the cachefile residing on rpool, provided the pools are not stalled. IMHO the best approach would be to give the initrd scripts the ability to do the sync on their own.

With this approach, if zpool.cache is present, it will survive the bpool load and will be taken into account by rvice, which essentially first loads the cached config in-core and then syncs it back with updated txn ids.

As for a permanent solution and pull request, I'm a bit lost here, because there is an open ticket for retiring the cache.

zfs list shows the relevant datasets, but they are missing from df -h and are not viewable. I have to run zfs mount -a after every boot to get them to actually mount. Can I add zfs mount -a somewhere into that service so that it runs automatically?

    ExecStartPre=/bin/sh -c ' & mv /etc/zfs/zpool.cache /etc/zfs/preboot_zpool.cache || true'
    ExecStart=/sbin/zpool import -N -o cachefile=none bpool
    ExecStartPost=/bin/sh -c ' & mv /etc/zfs/preboot_zpool.cache /etc/zfs/zpool.cache || true'
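The ExecStartPre/ExecStartPost pair above appears intended to move zpool.cache out of the way while bpool is imported and to put it back afterwards. A minimal shell sketch of that idea follows. The function name preserve_cache and its directory argument are hypothetical, and the file-existence checks are an assumption about what the garbled commands originally tested.

```shell
#!/bin/sh
# Hypothetical helper (not part of OpenZFS): run a command while
# zpool.cache is moved aside, then restore it.
# Usage: preserve_cache DIR COMMAND [ARGS...]
preserve_cache() {
    dir=$1
    shift
    # Assumption: only move the cache file if it actually exists.
    if [ -f "$dir/zpool.cache" ]; then
        mv "$dir/zpool.cache" "$dir/preboot_zpool.cache"
    fi
    # Run the wrapped command and remember its exit status.
    status=0
    "$@" || status=$?
    # Restore the cache file if it was moved aside.
    if [ -f "$dir/preboot_zpool.cache" ]; then
        mv "$dir/preboot_zpool.cache" "$dir/zpool.cache"
    fi
    return "$status"
}
```

On a real system this might be called as root with something like preserve_cache /etc/zfs /sbin/zpool import -N -o cachefile=none bpool, which mirrors the three service lines.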
$ sudo vim /lib/systemd/system/rvice

    Description=Import ZFS pools by device scanning
    #ConditionPathExists=!/etc/zfs/zpool.cache
    ExecStart=/sbin/zpool import -aN -o cachefile=none

Your workaround kind of works, but although my additional pools are imported, it does not appear to be mounting the datasets correctly.
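To check which datasets were imported but not mounted, the mounted property can be read alongside each dataset name. The sketch below assumes input in the tab-separated form printed by zfs list -H -o name,mounted. The helper name unmounted_datasets is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: read "name<TAB>mounted" lines on stdin, as printed by
# `zfs list -H -o name,mounted`, and print the datasets that are not mounted.
unmounted_datasets() {
    # Field 2 is "yes" or "no" for file systems; keep only the "no" rows.
    awk -F '\t' '$2 == "no" { print $1 }'
}
```

A possible use is zfs list -H -o name,mounted | unmounted_datasets. Datasets with canmount=off or canmount=noauto will also be listed, so an entry here is not necessarily an error.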

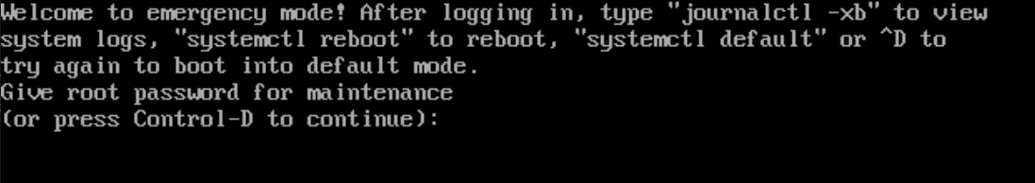

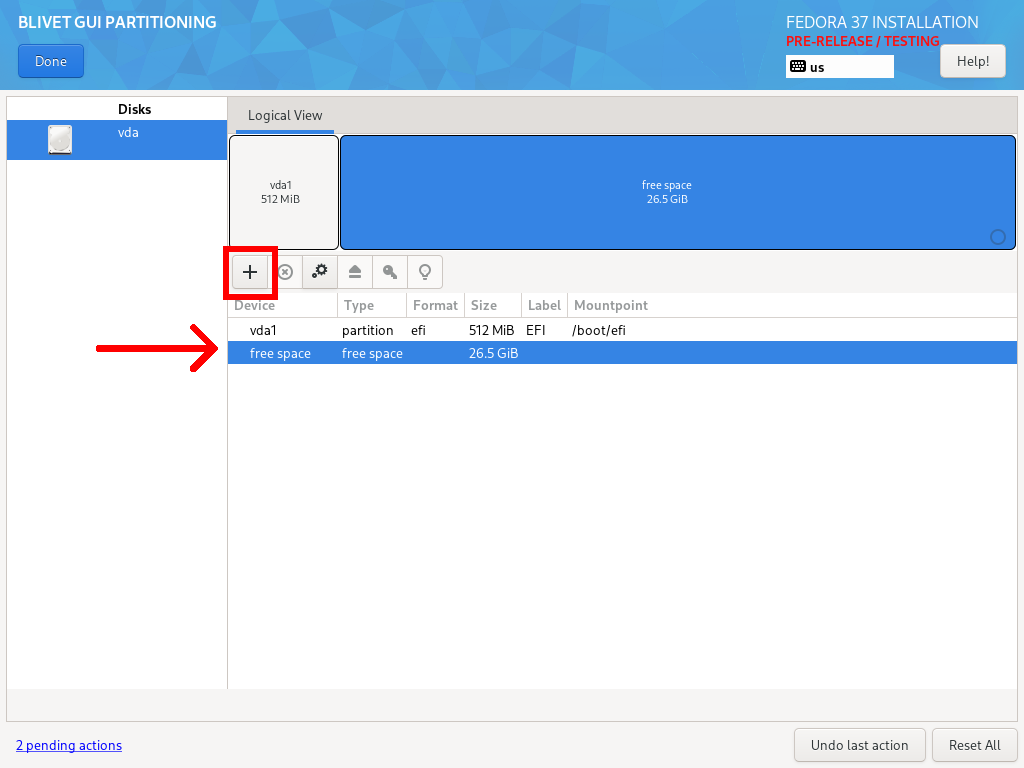

I can't figure out why, but I wonder whether importing the bpool causes the cache file to be cleared. However, after I restart, but before I manually import my array pool, the cache file is back to what it was.

- Add a second drive /dev/sdb and create a zpool on it with "zpool create -f sdb sdb".
- Add a ZFS file system to zpool sdb with "zfs create sdb/newfs".
- Set a mountpoint with: zfs set mountpoint=/path/to/mountpoint/ sdb/newfs.
- The system does not mount the new sdb/newfs on boot or see the zpool.
- Tried setting mountpoint=legacy and updating fstab; that fails, forcing an unclean boot into maintenance mode that requires running "zpool import -a" to continue.
- Tried updating /etc/zfs/zpool.cache by running "zpool set cachefile=/etc/zfs/zpool.cache sdb".
- Tried adding /etc/modprobe.d/zfs.conf containing "zfs_autoimport_disable=0".
- Tried adding a second systemd service per section 4.10 of.
- Tried running update-initramfs -u -k all after the pool had been imported, to no avail.

Nothing shows up in syslog indicating an error.
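For the mountpoint=legacy attempt, one possible cause of the failed boot is that the fstab mount is tried before the pool has been imported. The sketch below prints an fstab entry that asks systemd to order the mount after pool import and not to fail the boot if the mount is unavailable. The helper name legacy_fstab_line is hypothetical, and the choice of nofail and x-systemd.requires=zfs-import.target is an assumption, not something taken from the thread.

```shell
#!/bin/sh
# Hypothetical helper: print an /etc/fstab entry for a dataset whose
# mountpoint property is set to legacy.
# Usage: legacy_fstab_line DATASET MOUNTPOINT
legacy_fstab_line() {
    dataset=$1
    mountpoint=$2
    # Assumption: nofail keeps a missing pool from forcing maintenance mode,
    # and x-systemd.requires= orders the mount after the ZFS import target.
    printf '%s %s zfs defaults,nofail,x-systemd.requires=zfs-import.target 0 0\n' \
        "$dataset" "$mountpoint"
}
```

For the dataset from the steps above, legacy_fstab_line sdb/newfs /path/to/mountpoint prints a line that could be reviewed and then appended to /etc/fstab by hand.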
